In the lead-up to the 2018 midterm elections, more than 10,000 automated Twitter accounts were caught conducting a coordinated campaign of tweets to discourage people from voting. These automated accounts may seem authentic to some, but a tool called Botometer was able to identify them while they pretended to argue and agree, for example, that “democratic men who vote drown out the voice of women.” We are part of the team that developed this tool, which detects bot accounts on social media.

Our next effort, called BotSlayer, is aimed at helping journalists and the general public spot these automated social media campaigns while they are happening.

It's the latest step in our research laboratory's work over the past few years. At Indiana University's Observatory on Social Media, we are uncovering and analyzing how false and misleading information spreads online.

One focus of our work has been to devise ways to identify inauthentic accounts being run with the help of software, rather than by individual humans. We also develop maps of how online misinformation spreads among people and how it competes with reliable information sources across social media sites.

However, we have also noticed that journalists, political campaigns, small businesses and even the public at large may have a better sense than we do of what online discussions are most likely to attract the attention of those who control automated propaganda systems.

We receive many requests from individuals and organizations who need help collecting and analyzing social media data. That is why, as a public service, we combined many of the capabilities and software tools our observatory has built into a free, unified software package, letting more people join our efforts to identify and combat manipulation and misinformation campaigns.

Combining different tools

Many of our tools allow users to retrospectively query and examine our collection of a 10% random sample of all Twitter traffic over a long period of time. A user can specify keywords, hashtags, user mentions, locations or user accounts they're interested in. Our software then collects the matching tweets and looks more deeply at their content by extracting links, hashtags, images, movies, phrases and usernames those tweets contain.
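As a rough illustration of the matching and extraction step described above, here is a minimal Python sketch. It is not the observatory's actual code: the regular expressions and the function names `extract_entities` and `matches_query` are assumptions made for this example, and real tweet data carries structured metadata that a production system would use instead of pattern matching on raw text.

```python
import re

# Hypothetical patterns for illustration only; not BotSlayer's parsing rules.
URL = re.compile(r"https?://\S+")
HASHTAG = re.compile(r"#(\w+)")
MENTION = re.compile(r"@(\w+)")


def extract_entities(text):
    """Return the links, hashtags and mentions found in one tweet's text."""
    links = URL.findall(text)
    # Remove links first so a URL fragment such as "/#top" is not
    # mistaken for a hashtag.
    without_links = URL.sub(" ", text)
    return {
        "links": links,
        "hashtags": [tag.lower() for tag in HASHTAG.findall(without_links)],
        "mentions": MENTION.findall(without_links),
    }


def matches_query(text, keywords):
    """Case-insensitive check: does the tweet contain any query keyword?"""
    lowered = text.lower()
    return any(keyword.lower() in lowered for keyword in keywords)
```

A caller would first filter tweets with `matches_query` and then pass the survivors to `extract_entities` to build the lists of links, hashtags and usernames that the later analyses work on.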

Our trend analysis app looks at how closely that suspicious content trends together. Our network analysis app shows how ideas spread from user to user. Our map app checks the geographical pattern of suspicious activities around important topics.

Our Botometer app then detects how likely it is that elements of the online discussion are being coordinated by a group of automated accounts. Rather than reflecting authentic discourse among real people, the discussion may in fact be driven by accounts controlled by a single person or organization. These accounts usually act together, with some of them tweeting propaganda or disinformation, and others agreeing and retweeting, forming an inauthentic discourse around them to attract attention and draw real people into the online discussion.

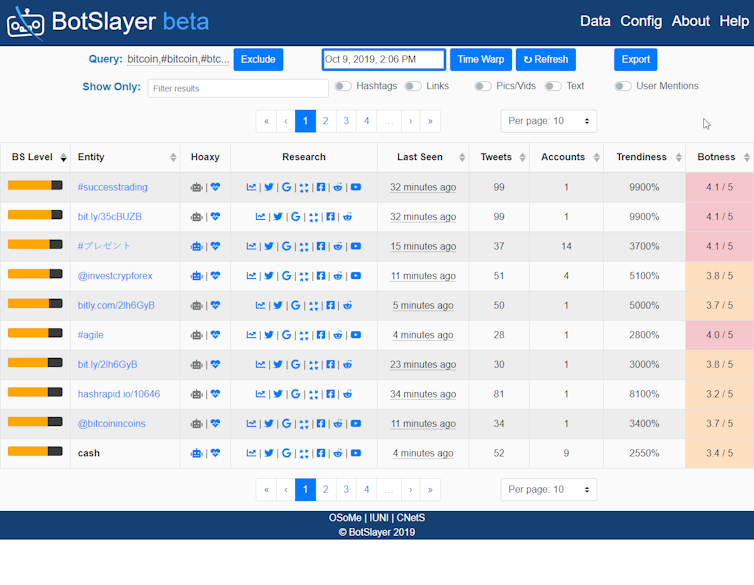

BotSlayer brings all the pieces together, letting its users run all those analyses on the entire flow of Twitter traffic.

BotSlayer's system collects all matching tweets – not just a sample – and saves them in a database for any retrospective investigation. Its web interface shows users, on one screen and in real time, the terms and keywords that are part of suspicious activity around their interests. Users can click on icons to search various websites and social media platforms for related malicious efforts elsewhere online.

For example, during the 2018 U.S. midterm election, many bot accounts that were reported on Twitter were also found to be related to Facebook bot accounts with similar profiles.

BotSlayer also provides links to our Hoaxy system, which shows how Twitter accounts interact over time, identifying which accounts are the most influential, and most likely to be spreading disinformation.

Proving useful already

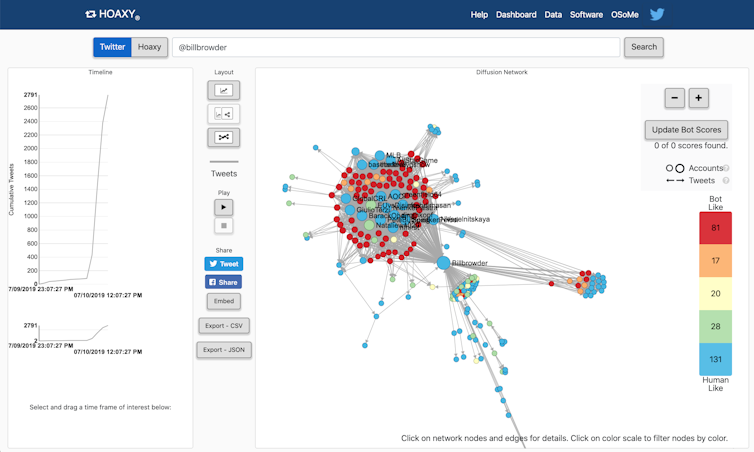

On July 10, 2019, one of our BotSlayer systems, focusing on Twitter activity about U.S. politics, flagged suspicious activity for us to investigate. The system noticed the appearance of a large group of tweets, mostly from brand-new Twitter accounts whose names ended with a string of numbers – like @MariaTu34743110. Those are clues that their activity may be generated by a bot.
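The two clues mentioned above, a brand-new account and a handle ending in a run of digits, can be combined into a simple check. The sketch below is only an illustration under stated assumptions: the function name `looks_suspicious`, the six-digit minimum and the 30-day cutoff are all hypothetical choices, not values used by BotSlayer or Botometer, and a match is a hint worth investigating rather than proof of automation.

```python
import re
from datetime import datetime, timedelta

# Assumed threshold: six or more digits at the end of the handle.
TRAILING_DIGITS = re.compile(r"\d{6,}$")


def looks_suspicious(handle, created_at, now, max_age_days=30):
    """Flag accounts that are both recently created and numerically suffixed.

    max_age_days is an illustrative cutoff for "brand-new".
    """
    has_digit_suffix = bool(TRAILING_DIGITS.search(handle.lstrip("@")))
    is_new = timedelta(0) <= (now - created_at) <= timedelta(days=max_age_days)
    return has_digit_suffix and is_new
```

Requiring both signals keeps the check conservative, since many ordinary users also have digits in their handles.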

They were posting and retweeting links to a single YouTube video attacking a financier named Bill Browder, who has been at the center of a dispute between the United States and the Russian Federation. That shared focus is a clue that all the accounts were part of an interconnected system.

When we dug deeper, we identified more than 80 likely bots coordinating with each other to draw widespread attention to Browder's alleged wrongdoing through the YouTube video.
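The shared-focus clue can also be sketched in code: group tweets by the link they carry and count how many distinct accounts pushed each one. This is a simplified illustration, not the method BotSlayer uses. The input format (pairs of account name and link), the function names and the `min_accounts` threshold are assumptions made for the example.

```python
from collections import defaultdict


def accounts_per_link(tweets):
    """Map each link to the set of distinct accounts that posted it.

    `tweets` is an iterable of (account, link) pairs (a hypothetical format).
    """
    shared = defaultdict(set)
    for account, link in tweets:
        shared[link].add(account)
    return dict(shared)


def heavily_shared(tweets, min_accounts=3):
    """Return links posted by at least `min_accounts` distinct accounts."""
    return {
        link: accounts
        for link, accounts in accounts_per_link(tweets).items()
        if len(accounts) >= min_accounts
    }
```

Counting distinct accounts rather than raw tweets matters here: one enthusiastic person posting a link many times looks very different from dozens of new accounts all posting it once.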

Plenty of other uses

Other coordinated campaigns have promoted financial scams, often seeking to sell questionable investments in cryptocurrencies. Scammers have impersonated internet celebrities like entrepreneur Elon Musk or software magnate John McAfee.

These accounts are a bit more sophisticated than political-attack bots, with one lead account typically announcing that users can multiply their riches by transferring some of their cryptocurrency into the scammer's digital wallet. Then other accounts retweet that announcement, in an effort to make the scheme seem legitimate. At times they reply with doctored screenshots claiming to show that the scheme works.

So far, several news, political and civic organizations have tested BotSlayer. They have been able to identify large numbers of accounts that publish hyperpolitical content at a superhuman pace.

The feedback from testers has helped us make the system more robust, powerful and user-friendly.

As our research advances, we will continue to improve on the system, fixing software bugs and adding new features. In the end, we hope that BotSlayer will become a sort of do-it-yourself toolkit enabling journalists and citizens worldwide to expose and combat inauthentic campaigns in social media.


Pik-Mai Hui, Ph.D. Student in Informatics and Network Science, Indiana University and Christopher Torres-Lugo, Ph.D. Student in Computer Science, Indiana University

This article is republished from The Conversation under a Creative Commons license. Read the original article.